Read the full report here.

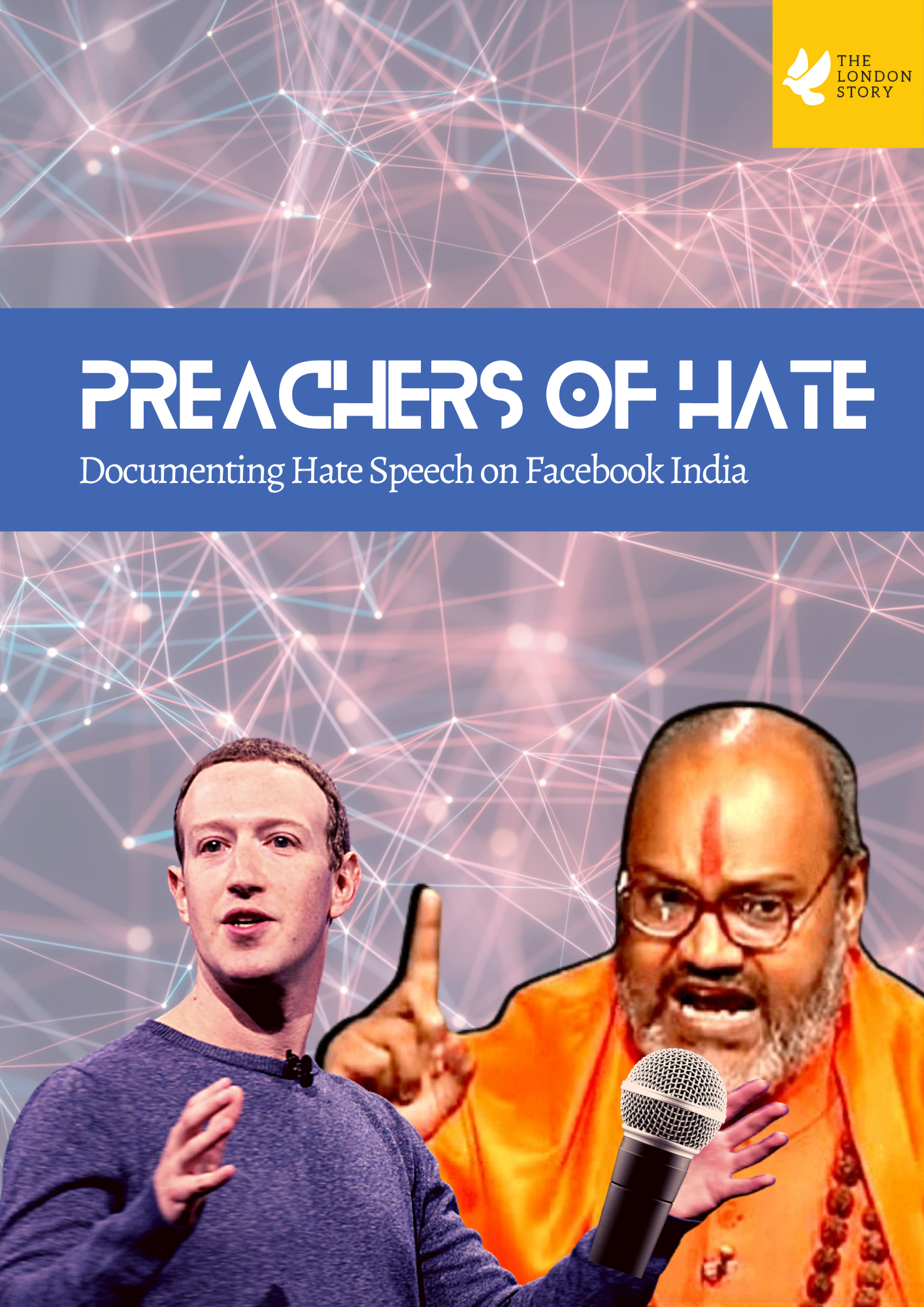

With almost 350 million users, India is Meta’s largest user market. Yet, Meta has blatantly failed to address hate content and hateful actors targeting religious minorities in India. We release this report against the backdrop of Meta’s Global Human Rights Impact Assessment (HRIA) Report, released on 14th July 2022. The Global HRIA was released in light of the United Nations Guiding Principles on Business and Human Rights (UNGP-BHR).

Principle 13 of UNGP-BHR states that corporations have the “responsibility to respect human rights” and are required to “(a) avoid causing or contributing to adverse human rights impacts through their own activities, and address such impacts when they occur; (b) seek to prevent or mitigate adverse human rights impacts that are directly linked to their operations, products or services by their business relationships, even if they have not contributed to those impacts.”

The UNGP-BHR make clear that a “business enterprise’s ‘activities’ are understood to include both actions and omissions; and its ‘business relationships’ are understood to include relationships with business partners, entities in its value chain, and any other non-State or State entity directly linked to its business operations, products or services.”

Principle 14 of the UNGP-BHR clearly lays down that the responsibility to respect human rights is proportional to, among other things, the size, scale, scope and irremediable character of the enterprise’s impact on human rights. Therefore, the severity of the impact is to be judged, not just the size of the enterprise.

To validate their commitment to human rights, enterprises are required by the UNGP-BHR to ‘adopt a policy commitment to BHR’, which Meta did, and to conduct human rights due diligence by assessing actual and potential human rights impacts that the business enterprise may cause or contribute to through its own activities, or which may be directly linked to its operations, products or services by its business relationships.

As part of the process and due to the persistent push from civil society stakeholders on Meta's involvement in creating an 'atmosphere of hate and fear in India', Meta undertook an Independent Impact Assessment of India. This process, which took well over one year, involved independent law firms speaking to stakeholders like us in order to provide assessments and recommendations to Meta. However, at the end of this process, Meta has covered up its adverse impact in a four-page summary. Therefore, as a stakeholder, we are compelled to release the actual findings that we shared with the independent law firm, and which were (assuredly) conveyed to Meta. Needless to say, we have also provided this report to Meta officials well in advance of this release.

Insights:

Our report has found Meta platforms to be spaces for far-right organisations and hateful political actors to organise, gain momentum, gain support, peddle disinformation narratives and orchestrate lynch mobs and hate crimes. Meta has consistently failed to respect its users, allowing, for instance, pictures and addresses of inter-faith couples to be widely circulated, leading to their doxing.

At a public hearing in non-adversarial proceedings in India, Meta’s officials failed to provide a clear and reliable answer on its content moderation policy and respect for Indian laws and fundamental rights (see excerpts from the proceedings and link to the YouTube video of the hearing on pg. 6 and 7 of the full report).

We respond by unequivocally saying: The risk of, and the actual, adverse human rights impacts of Meta’s platform (product) in India are prominent. Alongside other civil society organisations, we have clearly communicated this to Meta on several occasions in recent years. In these communications, we identified specific ‘actor-networks’ and ‘fan-pages’ inciting hate and calling for genocide. Yet, the risk of, and the actual, adverse human rights impacts in India emerge through and are amplified on Meta’s platform due to its business relations in India.